October 25, 2015

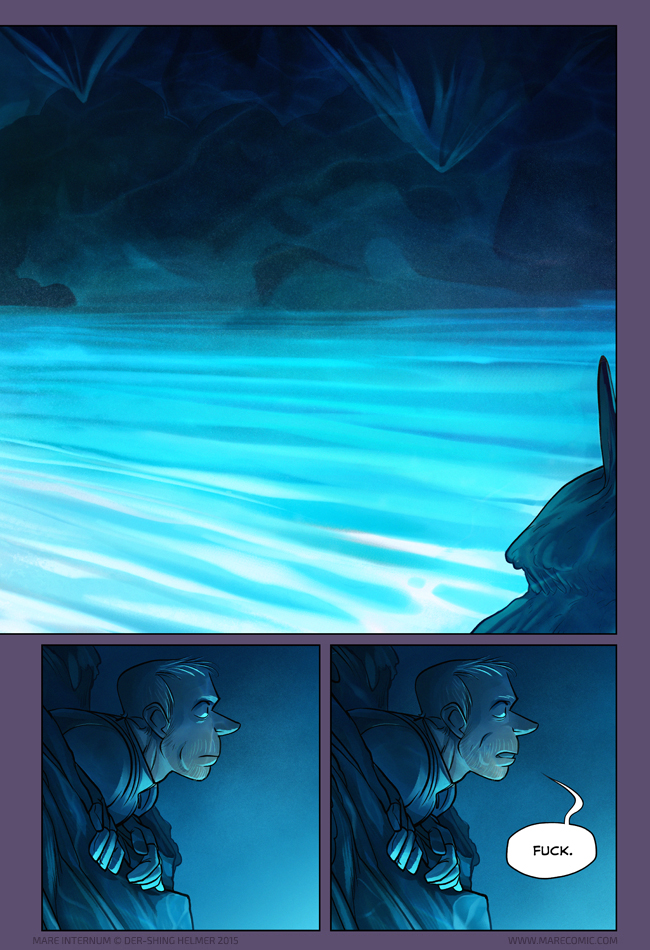

“It was a real ocean, with the capricious contours of the coastlines of the surface. It was empty, though, and looked horribly wild.”

Both pages together here.

Full size of the spread image available here for $5+ Patrons.

Today’s bonus art: a few of several scrapped concepts.

66 Comments

Welp, looks like Mike totally isn’t boned anymore.

Also! I love the way you’ve done the water reflections on the surface of the cavern. It’s a small thing but damn, it looks good.

Same, Mike. Same XD

Also, the blueish atmosphere in combination with the profile shots somehow makes this a bit reminiscent of the very first couple of pages in the comic (for me at least).

And also also, this is a really gorgeous spread!!

Ohohoho XD yeah, that was one of the primary design choices of the prologue. Good job noticing!

What’s with all the funky alien pool lighting? :) Probably some kind of bioluminescence, right?

IDK! I hope we find out

I bet it’s a sweet led strip light setup.

Thighfriend is all dressed up for clubbing! Everything makes sense now!

Interesting that the water level seemed to change because there are a lot of details on the same height on the structures on the left.

I noticed that too. Some wonderful detail around indications of changing sea levels and the lighting from the luminescence in the ocean on the rocks is amazing.

I wonder if those are stalagmites on the previous page or some other kinds of Mars life.

For that matter, is that Mike poking his head out in the bottom left of the top panel in the previous page?

My first thought is that the level lowers every time there’s a breach to the surface.

It’s possible one of the 2 marks was the level of water before that event a decade ago.

I wonder what effect Mike & Bex’s big puncture will have on that, tho of course it won’t show there yet.

Stop complaining, Mike, and enjoy the pretty colours.

Not sure that he’s complaining, probably just at a loss for words. I know a few people that, if overwhelmed with the beauty of say the Sistine Chapel or the Grand Canyon would resort to things like “Holy shit.” regardless of how appropriate it is.

Maybe he’s complaining that there doesn’t seem to be a way out? Unless you want to take a dive and swim, there doesn’t seem to be anywhere you can go from where he is.

Well, if there WAS, he would have the slight problem of all his air escaping. Without an airtight suit, he’d better hope he doesn’t find an exit.

Sistine Chapel…Holy Shit

:)

I’m ashamed to admit I lol-ed

Sometimes there really are no other words.

ah the coast looks like a pack of Goomys haha

I love the transition from the red ire of the fungi to the blue calm of the water(?).

The water surface is frozen, am I correct?

I fail to see how that is very bad. The thing in the water then can’t reach Mike.

If it isn’t, I guess Mike isn’t that much worse off than in the beginning. He had no idea where he is. At least he has water and air, of some sort anyway.

Don’t drink the (Martian) pool water, Mike. You don’t know what might have peed in it

Maybe Thighfriend will know what to do with all this blue stuff.

Welp, this presents a few problems.

Namely, I somehow doubt that water is empty.

But hey, at least he doesn’t need to depend on his impressively crappy helmet light anymore.

Good thing he got rid of it.

On the last page Mike very much looks like he is considering if somebody put something in his oxygen tank.

DEMMIT no sign of Bex’s body yet… oh, wait, look at that! It’s the MARE INTERNUM!

So… what now? Swim across it? Travel through the dangerous and most likely slippery rocks around it? Time to find out if fungus friend can fly?

Whatever the case, I’m eagerly waiting for another one of Mike’s monologues with his new friend.

I’m not sure Thighfriend would be too happy to be kept underwater.

Roll credits!

Beautiful. Even Mike had to appreciate it for a beat, before reality set in.

Where’s that quote from? it sounds pretty!

I _think_ it’s from Journey to the Center of the Earth by Jules Verne. I haven’t read that book in like forever though, so I might be wrong, but Shing does seem quite fond of it.

Haha, Lorenzo is right, it’s from Journey to the Center of the Earth. I enjoyed the spirit of mystery and exploration in that story.

The “Journey” was my first thought when I saw these spectacular pages. Involuntarily, one looks for people in nineteenth-century clothing.

It’s so good that we are back in blue again, shing!

Mike poppin’ out like an angry li’l prairie dog, love it :3

Beautiful page, Der-Shing.

Doesn’t look like he has a way down. Maybe a way up…

I wonder, too, if that “fuck” isn’t just a little bit “Well now I can’t die because I’ve gotta tell someone about this.”

That… that is beautiful :o

Holy amazewow this is beautiful! And I had almost the *exact* same reaction as Mike. :)

I am curious about the light (I know we have to wait to find out what causes it)–is the source in one localized spot, rather than present uniformly underwater? It seems like the first may be the case, since the back wall of the cavern is so much darker. Or is it just reaallllllly far away? So curious!

I love this comic, Der-shing. I love ALL your comics and have been a dedicated reader for many years. Your art is beautiful and meticulously thought out, and your storytelling and world-building skills are just frigging stunning. Thank you so much for sharing your talents with the world!

Kneefriend recommends the glowing lake water. It’s delicious!

Maybe he prefers cherry flavor over blueberry.

Fear and Self-Loathing In Mare Internum: the next cross country buddy movie! Starring Mike and Thighfriend

” There he goes. One of God’s own prototypes. A high-powered mutant of some kind never even considered for mass production. Too weird to live, and too rare to die. “

Took the chance to reread the last few pages and danggg, the flow is amazing. It’s unfortunate that the reader loses that sense of fine-tuned pacing when pages arrive on a webcomic posting schedule, but needless to say it makes the comic even better in one go. Picking out the foreshadowing bits is also a treat. :)

Even (correctly) guessing what was going to happen -thanks to the title of the webcomic as a hint, this is such a ‘gives me chills’ moment. Can’t wait to see what’s going to happen next.

Yeah, that’d be me, too. I mean, yes, it’s gorgeous, and some of it would be that. Most of it would be “Can Thighfriend get wet? Why am I so dependent on a thighfungus anyway? Is that safe to swim in? I’m stuck here, aren’t I?”

“how am I supposed to eat ?”

Where is this thighfungus getting nutrition, anyway? Is it eating for me? Or just eating me?

Next thing you know, there will be no Mike. And all thighfriend. And then, finally, he will be happy

I hope his super-mini-space-age-recorder is still intact, otherwise who will believe Mike if he ever surfaces?

Yes, there are those unexplainable traces on his leg. Plus the simple fact of his surviving.

But science is very good at looking the other way if things bother it too much!

Whelp, Mikey, you got yourself into a fine kettle of thigh friends. Just think of all those friends you will be making with your bones…

And Mike, for someone who so wants to die, you can’t seem to do that right… I mean, look at the girl a few pages back: she’s dead and you’re still breathing with your thigh friend… tsk

So is that water full of luminescent life? Microbes? Plankton? Some martian one that defies earthly classification? Either way, it’s probably not safe to drink.

The dialogue on this page gives me the lols. Especially when viewed as a spread page, with that whole open-mouth-no-close-the-mouth-what-would-I-say-okay-I’m-gonna-say-it-“Fuck.” thing going on.

This is really beautiful.

I’m so confused D:

So is Mike!

You know, I only discovered this recently, but I can already see your biology background. So many people would just make alien fauna look like a purple tiger, but you actually try to make the aliens look different from Earth life! With the added benefit of an awesome story, your top notch speculative evolution makes this comic a joy to read! You got a new fan!

What was he planning to do if he actually reached the surface anyway? Asphyxiate horribly?

The second panel on this half-page is narrower / has more left border than the others.

Yep, it’s a two-page spread, you can see what the entire page would look like in the comment link.

In the entire spread (comment link) the four bottom panels are each 280 px wide – except the third is ca. 272 px. It also breaks the consistency of your margins: https://40.media.tumblr.com/bdc5dfc8616679475ba25adb9a7bdcd8/tumblr_nyejkiMaFm1tk9a7zo1_400.jpg (I’ll take this down again later.)

Really intentional?

Oh, interesting. You can leave it up if you want, you got me XD

If you really want to know, the way I did this page was a bit different than most. Since I don’t know the final spine size of the future printed book, I made this spread one file with a maximum theoretical spine width inserted into the template, and the middle top image as a very very wide image that I can later trim as necessary. So my working .psd file has a huge middle border area in it that doesn’t appear in the stitched together spread I have linked. I probably grabbed too much of the border when I flattened and artificially compressed some stuff by a few px.

Anyways it’s gonna take me an annoying amount of time to tweak and resize and reupload, and I don’t think it detracts from the actual story or readability, so I won’t fix it until I have a print need… but you can bathe in the satisfaction of knowing you caught it, haha :P

Oh thanks for the insight into the process :D Glad you’re already taking into account the print layout; I do intend to get a copy down the line.

I’d rather bathe in the beauty of your art ^^ I did phrase my comment quite bluntly, but my nitpicking implicitly is of the respectfullest kind ;-)

> the future printed book

Excellent!

I love Mike’s expression of just complete and utter WAT.

Quick! Someone bring him a table!

First actual word spoken in a few pages and it is fuck.

I love this comic

The channel in the rockface at the very top of the previous page’s panels looks awfully like someone has carved out a pathway.

Damn, I can survive here.

This page gives me Freeman’s mind vibes.

Love this comic so far~! Idk why I’m imagining James Woods (Hades on Hercules) for Mike’s voice, but I am.